Senior Environment/Technical Artist Portfolio

Christopher Green

Technical Art

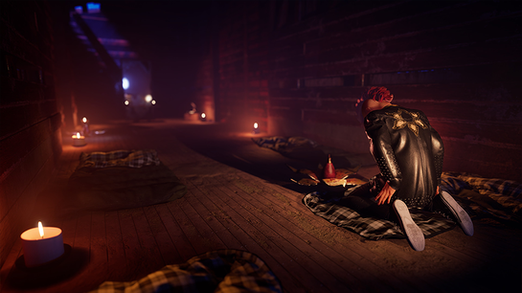

Brush Stroked Normals in Substance Painter for The Killing Stone

Early in the game's development, while we were experimenting with different artistic styles, we came across a few articles in which artists used manually painted world space normals to embed brush stroke detail into the texture. What really appealed to us was that it worked seamlessly with regular Unreal lighting, unlike some other, more post-process-heavy solutions. Our main concern was that the burden of manually painting each model would drive the texturing cost up, so I set about doing some prototyping.

Our initial solution was to use the Tile Sampler node in Substance Designer to scatter the brush strokes. The main problem was that we needed a way to guide the brush strokes to follow the surface and to correct for UV island orientation. The strokes also bled across UV islands, because they were scattered on the texture with no notion of the 3D geometry. The approach was also very processing heavy, which slowed down iteration time in Painter.

- Guiding - We created a comb map setup in Painter so the artists could paint the surface, effectively grooming a utility map of arrows with full artistic control.
- Bleeding / Processing - We shifted the brush strokes so they only affected a position swatch, which was then pushed into one of the user channels. The position map was then used in a filter that could quickly process any number of channels and apply the brush strokes uniformly. Using Painter's anchor point system, we placed an anchor point just before the brush stroke filtering, which let us quickly restore any areas where the brush strokes were bleeding incorrectly.
- Artistic Touch - Manual painting could be layered on top, allowing for easy cleanup and full artist control.
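The position swatch idea can be illustrated outside of Painter. The following is a minimal Python sketch, not the actual Painter filter: it assumes strokes are axis-aligned rectangles that each read a single value from their center, and the names `identity_position_map`, `stamp_strokes`, and `apply_position_map` are hypothetical. What it shows is that the strokes are stamped once into a map of lookup positions, after which any number of channels can be resampled through that same map.

```python
def identity_position_map(width, height):
    """Each texel stores its own (x, y) coordinate."""
    return [[(x, y) for x in range(width)] for y in range(height)]


def stamp_strokes(position_map, strokes):
    """Stamp strokes into a copy of the position map.

    Each stroke is (x0, y0, x1, y1), an inclusive rectangle. This is an
    assumption for illustration; real strokes would be oriented shapes.
    Every texel under a stroke is redirected to the stroke's center, so
    the whole stroke later reads one value from whatever channel is used.
    """
    height = len(position_map)
    width = len(position_map[0])
    result = [row[:] for row in position_map]
    for x0, y0, x1, y1 in strokes:
        # Clamp the center so the lookup always stays inside the map.
        cx = min(max((x0 + x1) // 2, 0), width - 1)
        cy = min(max((y0 + y1) // 2, 0), height - 1)
        for y in range(max(y0, 0), min(y1, height - 1) + 1):
            for x in range(max(x0, 0), min(x1, width - 1) + 1):
                result[y][x] = (cx, cy)
    return result


def apply_position_map(channel, position_map):
    """Resample any channel through the stamped position map."""
    return [[channel[sy][sx] for (sx, sy) in row] for row in position_map]


# A 4x4 gradient channel and one 2x2 stroke in the top-left corner.
channel = [[x + 4 * y for x in range(4)] for y in range(4)]
pmap = stamp_strokes(identity_position_map(4, 4), [(0, 0, 1, 1)])
stroked = apply_position_map(channel, pmap)
```

Because the stroke layout lives only in `pmap`, the same map can be reused for base color, roughness, or the painted normals without scattering the strokes again.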

Early in development we exported the resulting world space textures and baked them into tangent space for use in engine as a separate step. Eventually we found an excellent world-to-tangent-space converter that could be layered on top, speeding up iteration time.
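For reference, the conversion such a step performs is a change of basis. The sketch below is a generic Python illustration rather than the converter we used; it assumes an orthonormal tangent, bitangent, and normal per texel, and `world_to_tangent` and `encode_rgb` are hypothetical names.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


def world_to_tangent(world_normal, tangent, bitangent, normal):
    """Project a world space normal onto the surface's T, B, N axes.

    Assumes T, B, N are orthonormal, so the inverse of the TBN matrix is
    its transpose and the conversion reduces to three dot products.
    """
    n = normalize(world_normal)
    return normalize((dot(n, tangent), dot(n, bitangent), dot(n, normal)))


def encode_rgb(v):
    """Pack a unit vector from [-1, 1] into the usual [0, 1] texture range."""
    return tuple(0.5 * x + 0.5 for x in v)


# A surface facing +X: a painted normal equal to the surface normal
# becomes the flat tangent space normal (0, 0, 1).
t, b, n = (0.0, 1.0, 0.0), (0.0, 0.0, 1.0), (1.0, 0.0, 0.0)
flat = world_to_tangent((1.0, 0.0, 0.0), t, b, n)
```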

The result was very artist friendly, as artists could employ any modelling and baking processes they desired. We found that certain cloth patterns and character details required a slightly different setup, but one that the process handily supported. In these cases it made more sense to do simple coloring with patterns as a base layer and apply the brush stroke filtering to a baked light and curvature layer used as an overlay. The normal map and slight overlay gave a strong sense of brush painting without destroying the finer details. We could also apply the brush strokes to a noise texture and overlay it at a low opacity to give a slightly mottled look.

Materials of The Killing Stone

Materials for The Killing Stone were developed from the start to be iteration friendly for the entirety of the layout/modeling process. Later in the process we often added specific effects to ground each element and pull things together. For example, most outdoor geometry needed z-axis snow to match the snowy terrain.
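A common way to build this kind of effect is to mask the snow by how much the world space normal points up. The following Python sketch shows that idea with assumed parameter names (`threshold`, `sharpness`); it is an illustration of the general technique, not the material graph used in the game.

```python
def saturate(x):
    """Clamp to the 0..1 range, as a shader saturate would."""
    return max(0.0, min(1.0, x))


def snow_mask(world_normal_z, threshold=0.5, sharpness=4.0):
    """Return 0..1 snow coverage from the Z component of a unit world normal.

    threshold: how upward-facing a surface must be before snow appears.
    sharpness: how quickly coverage ramps to full above the threshold.
    Both are illustrative parameters.
    """
    return saturate((world_normal_z - threshold) * sharpness)


def blend(base, snow, mask):
    """Linear interpolation from the base color to the snow color."""
    return tuple(b + (s - b) * mask for b, s in zip(base, snow))
```

With these defaults a surface facing straight up is fully covered, a vertical wall gets none, and anything in between ramps according to `sharpness`.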

Early in the process we adopted a strategy we had used on previous titles: setting up an individual material instance for each model with an automated script. This "Previs" material gave us basic color and material controls, a nice editor grid, and the ability to select all temp geometry in the map based on the material association. Once a model was finished we would simply reparent its material instance to the appropriate Parent Material.

As development continued we diversified the materials with other options and a greater range of versions while still keeping them as artist friendly as possible. For example, the Architecture material had better tinting controls and supported what we called the decay system. This meant that any architecture asset could have decay, wetness, and frost applied to it, revealed by gameplay-driven global parameters.

These are some of the materials I authored and managed:

- PBR Parent - Main material for the game
  - Mainly needed for basic Diffuse, Normal, and Mask inputs. Later added toggles with procedural masking for wetness and snow.
- Architecture - Main architecture material
  - Set up as a counterpoint to Primary Parent to support wider use with the architecture.
  - Able to tint and diffuse the base textures for better integration in more dilapidated areas.
  - Global parameters could layer decay, wetness, and frost to match game progression.
- Previs - Basic initial material for most models
  - Basic color and other material controls.
  - World space grid for easy layout work.
  - Custom Data support for individual tinting.
- Windows - Frosted glass for all of the exterior-facing windows
  - Basic frosted glass with the option of an additional fake HDR to boost reflections.
  - Frosting and snow accumulation could be applied based on vertex colors from Houdini. This allowed for accumulation across the whole vertical window, on individual panes, and around the frames.
  - Dynamic cracking by offsetting the texture and masking the crack effect using a pane mask texture. Each window would offset the texture's values, effectively randomizing which panes would be cracked and to what degree.
- Eyes - Eye material used for the characters
  - Needed the concave shape of the cornea to better catch the specular highlights.
  - Fake HDR reflection to increase the perception of "wetness."
  - Parallax to give the iris and pupil a bit more perceived depth.
  - Eye dilation applied to the UVs of the diffuse so each character could have a little differentiation beyond their basic color changes.
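The window cracking described above can be sketched in a few lines. This is a minimal Python illustration under stated assumptions, not the material itself: it assumes the pane mask stores one value in the 0..1 range per pane, that each window supplies its own offset, and that `crack_amount` and `threshold` are hypothetical names.

```python
import math


def frac(x):
    """Fractional part, matching the shader-style frac for positive inputs."""
    return x - math.floor(x)


def crack_amount(pane_value, window_offset, threshold=0.5):
    """Return 0..1 crack intensity for one pane of one window.

    pane_value: the value stored for this pane in the pane mask (0..1).
    window_offset: per-window offset that shifts every pane's value.
    threshold: panes whose shifted value falls below this stay intact
    (an illustrative parameter).
    """
    shifted = frac(pane_value + window_offset)
    if shifted < threshold:
        return 0.0
    # Remap the remaining range to 0..1 so the degree of cracking varies.
    return (shifted - threshold) / (1.0 - threshold)


# The same pane is intact on one window and half cracked on another.
intact = crack_amount(0.25, window_offset=0.0)
cracked = crack_amount(0.25, window_offset=0.5)
```

Because the offset wraps around, every window reuses the same mask texture while still ending up with a different set of cracked panes.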

Kids' Fortifications in South Park: Snow Day!

In South Park: Snow Day! it was very important to us that the fortifications the kids were building as part of their game felt cobbled together very quickly, in a slapdash way. I started prototyping a way to use PDG in Houdini to ingest very rough block-in models and output polished models that were ready for texturing.

The process looked something like this:

- Model a basic layout
  - Add long thin blocks that would be processed and become wood planks.
  - Add basic intersecting planes anywhere that we wanted loops of rope.
  - Add any other details, such as cardboard, nails, or other such elements. These needed to be modeled as desired and have a basic UV layout.
- Ingest into Houdini PDG graph
  - Houdini would go over a preset directory and pull in any files.
  - It would then filter those based on file type and pair them based on naming convention, combining them into work items.
- Process the work items
  - Temp Mesh - Basic modelling work
    - Converted blocks to wood and cards to rope loops via their own HDA subgraphs.
    - Colored each section for ID.
    - Processed the combined mesh through a RizomUV processor for high quality packed UVs.
  - Bake Mesh - Set up a copy of the temp mesh for use in Substance Painter, with the necessary naming and values so Painter would populate textures into their appropriate slots based on naming convention.
  - Asset Mesh - Set up a cleaned copy of the temp mesh for use in engine.
  - Texture Baking - Baked basic textures, as well as an ID texture and a random element texture for randomizing the tinting of wood and other pieces.
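The ingest step above (filter by file type, then pair by naming convention into work items) can be sketched in plain Python. This does not use the PDG API, and the specifics are assumptions for illustration: the allowed extensions, the `_blocks` and `_details` suffixes, and the `build_work_items` name are all hypothetical.

```python
from collections import defaultdict
from pathlib import PurePosixPath

ALLOWED_EXTENSIONS = {".fbx", ".obj"}    # assumed file types
ROLE_SUFFIXES = ("_blocks", "_details")  # hypothetical naming convention


def build_work_items(file_names):
    """Group files that share a base name into one work item each."""
    groups = defaultdict(dict)
    for name in file_names:
        path = PurePosixPath(name)
        if path.suffix.lower() not in ALLOWED_EXTENSIONS:
            continue  # filter by file type
        for role in ROLE_SUFFIXES:
            if path.stem.endswith(role):
                base = path.stem[: -len(role)]
                groups[base][role.lstrip("_")] = name
                break
    # Keep only complete pairs; incomplete sets are skipped.
    return {
        base: roles
        for base, roles in sorted(groups.items())
        if len(roles) == len(ROLE_SUFFIXES)
    }


files = [
    "fort_a_blocks.fbx",
    "fort_a_details.fbx",
    "fort_b_blocks.fbx",
    "notes.txt",
]
work_items = build_work_items(files)
```

In this example only `fort_a` becomes a work item: `fort_b` has no matching details file and `notes.txt` is filtered out by type.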

Substance Designer Utilities for Question

Over the course of my time at Question Games I built out various utilities in Substance Designer that were used across its projects. Most were simple utilities to make work easier and more streamlined, but some helped to define the way we produced artwork.

- Stylized Edgewear - Using the standard edge wear as a base, we piped in a variety of our own more stylized noise patterns. This helped to cut down on the photoreal quality while still fulfilling its intended use. We also coupled this with painted weighting, inspired by Arkane's work on Deathloop, so we could paint extra wear in and out for better control.
- Gradient Mapper - This utility was meant to ease the process of introducing color variation into a texture. The artist inputs whatever texture they want and then selects from a number of prebuilt gradient maps that are dynamically mapped to the input. With controls for tinting, value chaos, and others, they can freely mix the colors around while still getting a cohesive result that can then be layered over whatever they like. This was particularly useful for taking random color bakes and pulling them in to randomize pieces of wood and other such natural materials.
- Stylizer - This was a simple way to introduce a little baked detail into the base color. It uses a lot of the standard tricks of stylized art, like using curvature edges, deepening ambient occlusion, or adding fake top-down lighting. It was particularly useful when applied as a filter, as it could take a somewhat photoreal source and, with a little intermediate blending, produce a nicely stylized result for texturing.
- Signed Distance Generator - SDF textures are very useful in a lot of applications, and we began experimenting with them for UI and certain VFX applications. This generator simply creates an approximate SDF for any input texture.
- Templates - An often underused part of Substance Designer, in my opinion, is the ability to define a template. Templates can be incredibly useful for pre-making some of the tedious markup that makes for a clean and readable graph. We also experimented for a time with dynamically mutating templates using the Substance Toolkit, which had a lot of potential, but we agreed it was not necessary for our direction for the game.
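To make the signed distance idea concrete, here is a minimal brute-force Python sketch. It is not the Substance Designer graph: it assumes a binary mask as input, measures distances in texels, and uses the hypothetical name `signed_distance_field`. A brute-force search like this is only practical for small inputs, but it shows what the values in an SDF texture mean.

```python
import math


def signed_distance_field(mask):
    """Compute a signed distance field from a 2D list of 0/1 values.

    Returns distances in texels: positive inside the shape (distance to
    the nearest outside texel), negative outside (distance to the nearest
    inside texel). The sign convention is a choice made for this sketch.
    """
    height, width = len(mask), len(mask[0])
    inside = [(x, y) for y in range(height) for x in range(width) if mask[y][x]]
    outside = [(x, y) for y in range(height) for x in range(width) if not mask[y][x]]

    def nearest(x, y, points):
        return min(math.hypot(x - px, y - py) for px, py in points)

    field = []
    for y in range(height):
        row = []
        for x in range(width):
            if mask[y][x]:
                row.append(nearest(x, y, outside) if outside else float("inf"))
            else:
                row.append(-nearest(x, y, inside) if inside else float("-inf"))
        field.append(row)
    return field


# A single filled texel in the middle of a 3x3 mask.
mask = [
    [0, 0, 0],
    [0, 1, 0],
    [0, 0, 0],
]
sdf = signed_distance_field(mask)
```

For use in a texture, the signed values would typically be scaled and remapped so that the shape's edge sits at mid gray.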